Migrating Databases with Rails at Scale

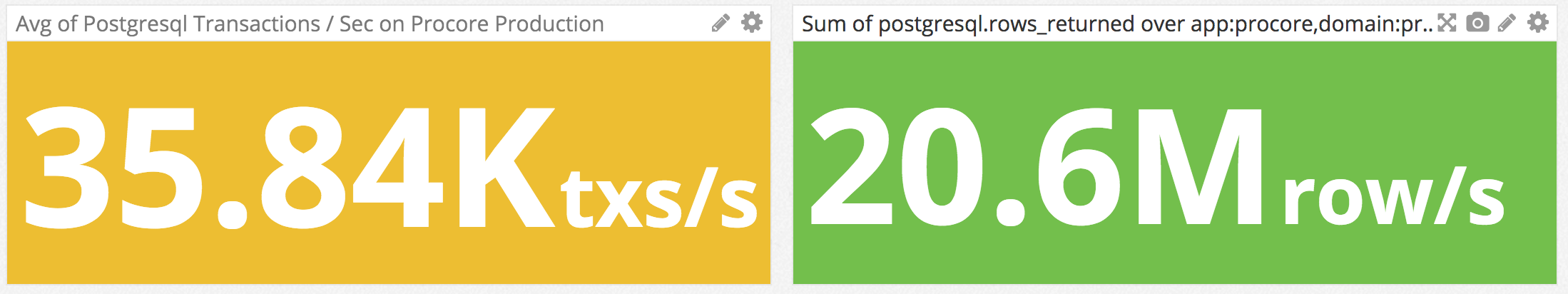

At Procore, PostgreSQL is our workhorse. The nature of our product - a very large suite of enterprise business management tools for the construction industry - requires a fully normalized relational database with over 600 tables, and we keep adding more. Our traffic peaks predictably every weekday morning as an average of 80,000 construction industry professionals log in and use Procore throughout their day. It's not unusual for our database to perform 35,000 transactions / second and scan 20,000,000 rows a second sustainedly for several hours every morning.

At the same time, our engineering team is hard at work shipping the new features that make us the leader in our industry. Our focus on agile and iterative development encourages ongoing improvements to our schema as we add tables and columns, migrate data to new schemas, and add indexes to improve performance. To maximize our productivity, we've designed a process for running migrations that allows any of our squads to deploy and migrate at any time of the day, regardless of the load on our database. Successfully running migrations on a huge database at peak load required a carefully formulated migration strategy and a bit of custom tooling as well.

The Challenge

PostgreSQL is an ACID compliant database. Among other things, the C in ACID (which stands for Consistent) means that if you define constraints on your database - such as foreign keys, unique indexes and NOT NULL columns - it is guaranteed to enforce those constraints. When you update data or make changes to your schema, sometimes the database has to lock rows or whole tables in order to remain consistent. Locking under heavy load can cause web requests to queue up and can cause your site to go offline if not done carefully. The primary goal of our migration strategy, therefore, is to minimize locking to the greatest extent.

As an example, take the seemingly simple task of adding a new column with a NOT NULL constraint, and a default value:

We have two million users in this table, and every web request reads from it at least once, making it the most contested table in our database. Running this migration would lock the users tables for the length of time it takes to write false into the is_admin column on every one of those million plus rows. This would more than likely cause pages to timeout, effectively taking down our site right when our customers need it the most (and that's only if it manages to acquire the lock necessary to add this column in the first place).

Performing this migration successfully requires breaking it up into multiple migrations - five in this case - to minimize the time the table is locked. Because of the way ActiveRecord caches the schema metadata from our tables, during our deployment, some of those 5 migrations must run before our server has restarted and the new code has taken effect. After that, some of them must run after a restart.

Managing this complexity requires some custom tooling, specifically a Ruby gem we wrote called handcuffs (pull requests welcome!). Handcuffs allows migrations to be tagged so they can automatically be run in phases during complex multi-part migrations. Performing the add-column operation above at scale requires that it be broken up into the following 5 migrations, where we use tactics such as updating in batches, and adding temporary index to minimize locking and prevents schema caching issues:

In the above migrations, you can see phase declarations, such as phase :post_restart. Each phase can be run with a rake task, such as rake handcuffs:migrate[post_restart]. Our deployment scripts (controlled by a nifty Slackbot named Sherpa) run these tasks at the proper time during the deployment allowing us to successfully avoid certain pitfalls related to schema caching. This ensures the site stays up and our developers are able to release features all day, every day whenever they're ready to ship.

If you're wondering about the details of how database locking works and want to know why the above 5 part migration strategy is necessary to minimize locking, I gave a talk about it at Scale15x in Los Angeles last March, which you can view here (the video purposefully starts at 3:43 when my talk begins):

As you can see, the scale we run at adds quite a bit of complexity to what is usually very simple. If you're interested in this subject and have questions or comments, I'd love to hear from you on Twitter. Just mention @ProcoreEng or me, @BradUrani. If you'd like to work on tough engineering problems like this, we'd love to talk to you about career opportunities at Procore. We're currently hiring for over a dozen different roles within our engineering department, so check out our job listings and reach out to us!